Generative AI Audit

Generative AI is driving rapid progress across the technology landscape, enabling platforms such as OpenAI’s ChatGPT, Amazon Bedrock, and a growing number of innovative services. Although these tools are reshaping human–machine interaction, they also raise complex privacy concerns—particularly around the handling and possible exposure of Personally Identifiable Information (PII).

This article explores the implications of auditing generative AI environments, highlighting key privacy risks and practical strategies to strengthen auditing practices and overall data protection controls.

The Expanding World of Generative AI Audit

Generative AI now extends well beyond any single platform. Today, we see a diverse ecosystem:

- OpenAI’s ChatGPT: A conversational AI that has become synonymous with generative capabilities.

- Amazon Bedrock: A fully managed service allowing easy integration of foundation models into applications.

- Google’s Bard (since rebranded as Gemini): A conversational AI service that was originally powered by LaMDA.

- Microsoft’s Azure OpenAI Service: Providing access to OpenAI’s models with the added security and enterprise features of Azure.

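These platforms provide API access for developers and web-based interfaces for end users. Every such entry point is another channel through which sensitive data can leave the organization, so the attack surface grows with each service adopted.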

Privacy Risks in the Generative AI Landscape

The widespread adoption of generative AI introduces several privacy concerns:

- Data Retention: AI providers may retain user inputs to improve their models, and those inputs can include sensitive information.

- Unintended Information Disclosure: Users might accidentally reveal PII during interactions.

- Model Exploitation: Sophisticated attacks could potentially extract training data from models.

- Cross-Platform Data Aggregation: Using multiple AI services could lead to comprehensive user profiles.

- API Vulnerabilities: Insecure API implementations might expose user data.

General Strategies for Mitigating Privacy Risks

To address these concerns, organizations should consider the following approaches:

- Data Minimization: Limit the amount of personal data processed by AI systems.

- Anonymization and Pseudonymization: Transform data to remove or obscure identifying information.

- Encryption: Implement strong encryption for data in transit and at rest.

- Access Controls: Strictly manage who can access AI systems and stored data.

- Regular Security Audits: Conduct thorough reviews of AI systems and their data handling practices.

- User Education: Inform users about the risks and best practices when interacting with AI.

- Compliance Frameworks: Align AI usage with regulations like GDPR, CCPA, and industry-specific standards.
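
To make the pseudonymization idea more concrete, here is a minimal sketch of replacing PII in a prompt before it is sent to an AI service. It uses only the Python standard library. The two regular expressions, the `pseudonymize` function, and the demo key are assumptions made for illustration; real PII detection needs much broader coverage than two patterns.

```python
import hashlib
import hmac
import re

# Illustrative patterns only (assumption): production PII detection
# needs far more than an email and a US SSN pattern.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def pseudonymize(text: str, secret_key: bytes) -> str:
    """Replace each PII match with a stable keyed token, e.g. <EMAIL_1a2b3c4d>."""

    def make_replacer(label: str):
        def replace(match: re.Match) -> str:
            # HMAC keeps the token stable for the same value and key,
            # while hiding the original value from the AI service.
            digest = hmac.new(secret_key, match.group(0).encode("utf-8"), hashlib.sha256)
            return f"<{label}_{digest.hexdigest()[:8]}>"

        return replace

    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(make_replacer(label), text)
    return text


prompt = "Email jane.doe@example.com about SSN 123-45-6789."
safe_prompt = pseudonymize(prompt, secret_key=b"demo-key-rotate-me")
print(safe_prompt)
```

Because the token is derived with a secret key, the same value always maps to the same token, so conversations stay coherent, but the token cannot be reversed without a lookup table kept on the organization's side.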

Auditing Generative AI Interactions: Key Aspects

Effective auditing is crucial for maintaining security and compliance. Key aspects include:

- Comprehensive Logging: Record all interactions, including user inputs and AI responses.

- Real-time Monitoring: Implement systems to detect and alert on potential privacy breaches immediately.

- Pattern Analysis: Use machine learning to identify unusual usage patterns that might indicate misuse.

- Periodic Reviews: Regularly examine logs and usage patterns to ensure compliance and identify potential risks.

- Third-party Audits: Engage external experts to provide unbiased assessments of your AI usage and security measures.
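
As a starting point for pattern analysis, the sketch below flags users whose request volume is far above the rest. It is a simple statistical baseline (a modified z-score based on the median absolute deviation), not a machine learning model, and it stands in for whatever detection method a real deployment would use. The `(user, prompt)` event shape, the function name, and the 3.5 threshold are assumptions.

```python
from collections import Counter
from statistics import median


def flag_unusual_users(events, threshold=3.5):
    """Return users whose request count is a high outlier by modified z-score.

    `events` is an iterable of (user, prompt) pairs taken from an audit log.
    """
    counts = Counter(user for user, _ in events)
    if not counts:
        return []
    values = list(counts.values())
    med = median(values)
    # Median absolute deviation: a spread measure that outliers barely affect.
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # Limitation of this sketch: with no spread, nothing is scored.
        return []
    return sorted(
        user
        for user, n in counts.items()
        if 0.6745 * (n - med) / mad > threshold
    )


events = (
    [("alice", "p")] * 3
    + [("bob", "p")] * 4
    + [("carol", "p")] * 5
    + [("dave", "p")] * 4
    + [("mallory", "p")] * 60
)
print(flag_unusual_users(events))  # → ['mallory']
```

A median-based score is used here because a single heavy user would inflate a mean and standard deviation enough to hide itself.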

DataSunrise: A Comprehensive Solution for AI Auditing

DataSunrise offers a robust solution for auditing generative AI interactions across various platforms. Our system integrates seamlessly with different AI services, providing a unified approach to security and compliance.

Key Components of DataSunrise’s AI Audit Solution:

- Proxy Service: Intercepts and analyzes traffic between users and AI platforms.

- Data Discovery: Automatically identifies and classifies sensitive information in AI interactions.

- Real-time Monitoring: Provides immediate alerts on potential privacy violations.

- Audit Logging: Creates detailed, tamper-proof logs of all AI interactions.

- Compliance Reporting: Generates reports tailored to various regulatory requirements.
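
For readers curious how audit logs can resist silent modification, one common general technique is a hash chain, in which each entry stores the hash of the entry before it. The sketch below illustrates that technique only. It is not a description of how DataSunrise implements its logs, and the record fields and function names are assumptions. Strictly speaking, a hash chain makes a log tamper-evident: changes are detectable rather than impossible.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    # Canonical JSON so the same record always hashes to the same value.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append(
        {"record": record, "prev": prev_hash, "hash": _entry_hash(prev_hash, record)}
    )


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, {"user": "alice", "prompt": "Summarize Q3 report", "response_chars": 512})
append_entry(audit_log, {"user": "bob", "prompt": "Draft a reply", "response_chars": 240})
print(verify_chain(audit_log))
```

In practice the latest hash would also be stored somewhere the log writer cannot modify, since an attacker who can rewrite the whole chain can also recompute it.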

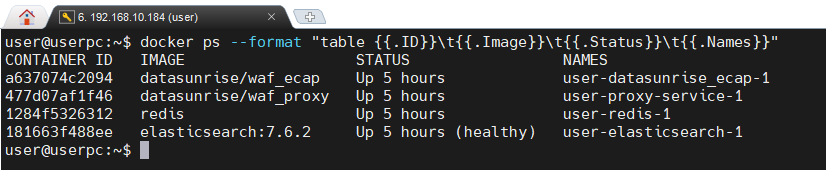

The image below shows four running Docker containers. They provide DataSunrise Web Application Firewall functionality, which strengthens the security of the depicted system.

Example Setup with DataSunrise

A typical DataSunrise deployment for AI auditing might include:

- DataSunrise Proxy: Deployed as a reverse proxy in front of AI services.

- Redis: For caching and session management, improving performance.

- Elasticsearch: For efficient storage and retrieval of audit logs.

- Kibana: For visualizing audit data and creating custom dashboards.

- DataSunrise Management Console: For configuring policies and viewing reports.

This setup can be deployed with container tooling such as Docker and Kubernetes, which helps with scalability and ease of management.
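
How audit records reach Elasticsearch depends on the deployment. One plausible route is Elasticsearch's `_bulk` REST endpoint, which accepts newline-delimited JSON: an action line followed by the document itself, with a trailing newline at the end of the body. The sketch below only builds that request body and does not contact a server. The `ai-audit` index name, the record fields, and the helper name are assumptions.

```python
import json


def build_bulk_payload(records, index_name="ai-audit"):
    """Build a newline-delimited JSON body for Elasticsearch's _bulk endpoint."""
    lines = []
    for record in records:
        # Action line: index the next line as a document in `index_name`.
        lines.append(json.dumps({"index": {"_index": index_name}}))
        # Source line: the audit record itself.
        lines.append(json.dumps(record))
    if not lines:
        return ""
    # The bulk body must end with a newline.
    return "\n".join(lines) + "\n"


records = [
    {"user": "alice", "service": "chatgpt", "prompt_chars": 42},
    {"user": "bob", "service": "bedrock", "prompt_chars": 17},
]
payload = build_bulk_payload(records)
print(payload)
```

The resulting string would be sent as the body of a `POST` to `/_bulk` with the `Content-Type: application/x-ndjson` header, after which the records can be explored in Kibana.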

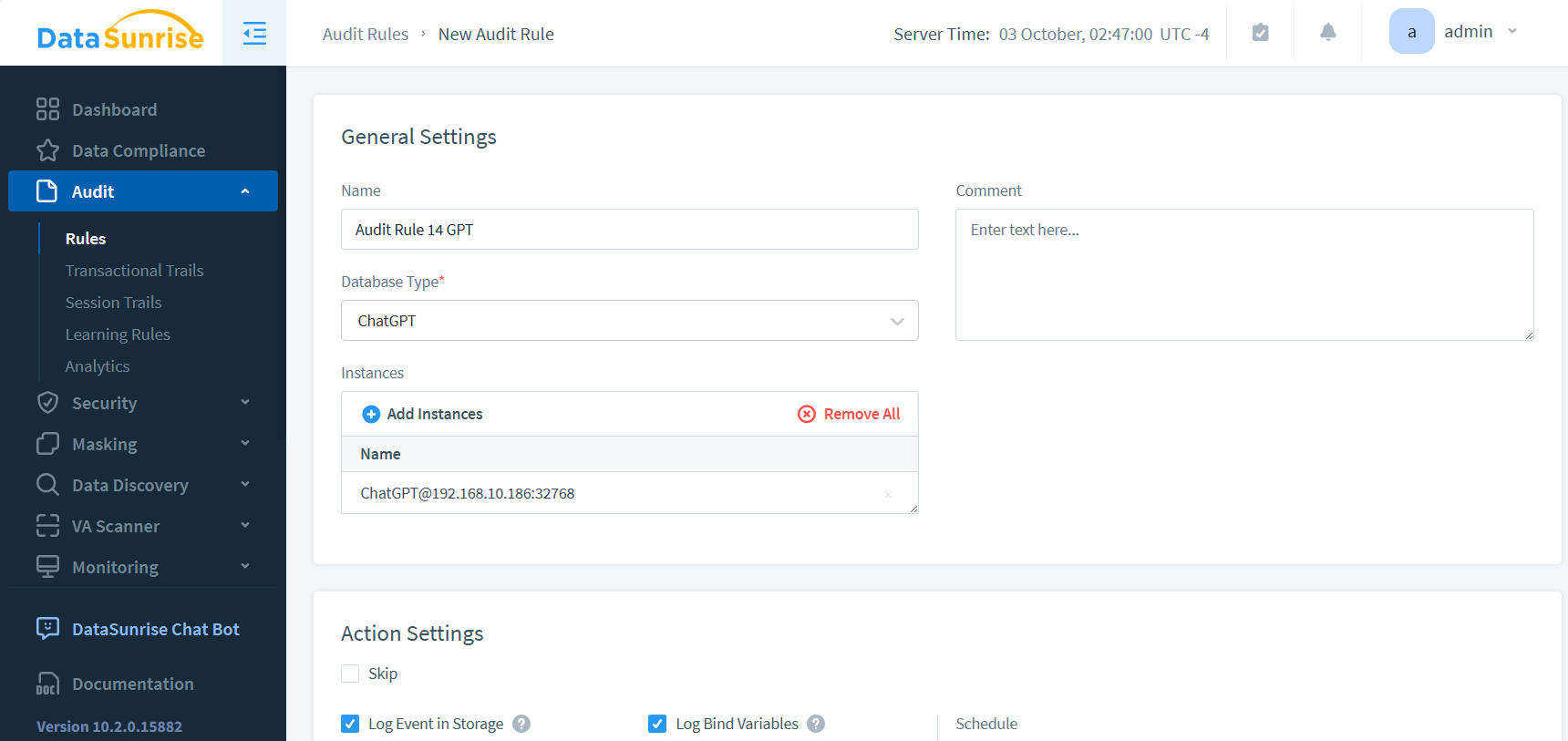

Setting up audit rules is straightforward. In this case, we select the relevant instance, which is not a database but rather ChatGPT, a web application. This process demonstrates the flexibility of the auditing system in handling various types of applications.

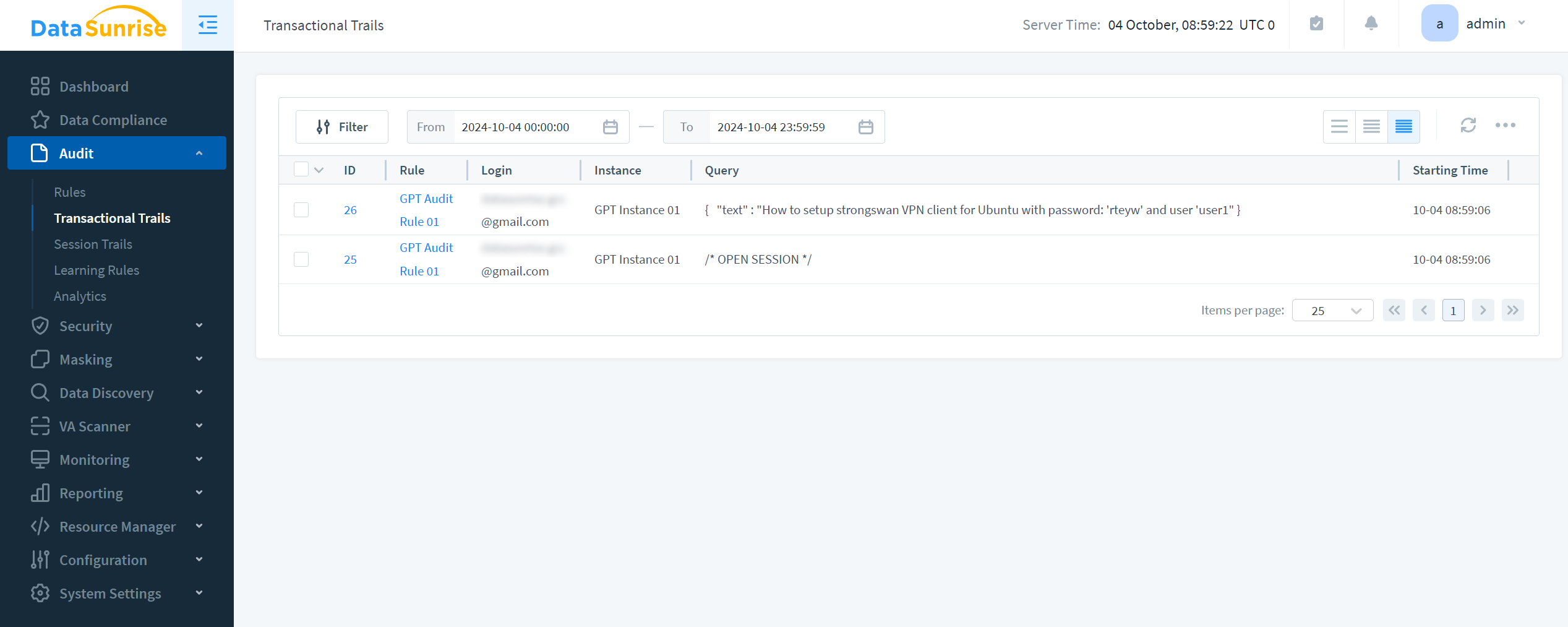

The audit results and the corresponding GPT prompt are as follows:

Conclusion: Using AI with Confidence

Generative AI has moved beyond experimentation and into daily operations. It now powers analytics engines, automation frameworks, and decision-support tools across multiple sectors. As its influence grows, organizations must implement structured auditing, monitoring, and security mechanisms to ensure AI systems behave consistently, securely, and in accordance with internal policies and regulatory obligations.

Effective AI governance requires more than policy statements—it demands enforceable technical controls. Ongoing audit trails, context-aware activity monitoring, and fine-grained access restrictions create transparency around how models retrieve, process, and generate data. This level of oversight minimizes compliance exposure and operational risk while still enabling innovation to advance at speed.

Sustainable AI adoption ultimately depends on trust. When privacy safeguards, auditability, and security enforcement are embedded directly into AI workflows, organizations can scale generative technologies without compromising compliance, data integrity, or stakeholder confidence.

DataSunrise: A Comprehensive AI Security Platform

DataSunrise provides a unified architecture for protecting AI environments by integrating auditing, real-time monitoring, and data protection functions within a single centralized platform. This model ensures controlled data access and continuous oversight across databases, cloud infrastructure, and AI-powered applications operating in modern enterprise ecosystems.

Built to support the evolving security requirements of generative AI systems, the platform helps detect abnormal activity, enforce governance policies, and minimize the risk of sensitive data exposure as AI workloads and deployments expand.

Discover how DataSunrise enhances AI governance and safeguards critical data by reviewing our AI security solutions or requesting a live demo to explore the platform in action.